Fine-tuning models such as LLaMA 3, Mistral, and Falcon has become increasingly efficient thanks to techniques like parameter-efficient fine-tuning and quantization. This guide is an intermediate-level tutorial on fine-tuning a LLaMA 3 model, complete with a demonstration. Readers should have a foundational understanding of Generative Pretrained Transformers before proceeding, and running the demo requires a sufficiently powerful NVIDIA GPU.

Prerequisites

Before starting with the fine-tuning process, ensure you have the following:

- A foundational understanding of machine learning and neural networks.

- Experience with Python programming and familiarity with libraries such as PyTorch or TensorFlow.

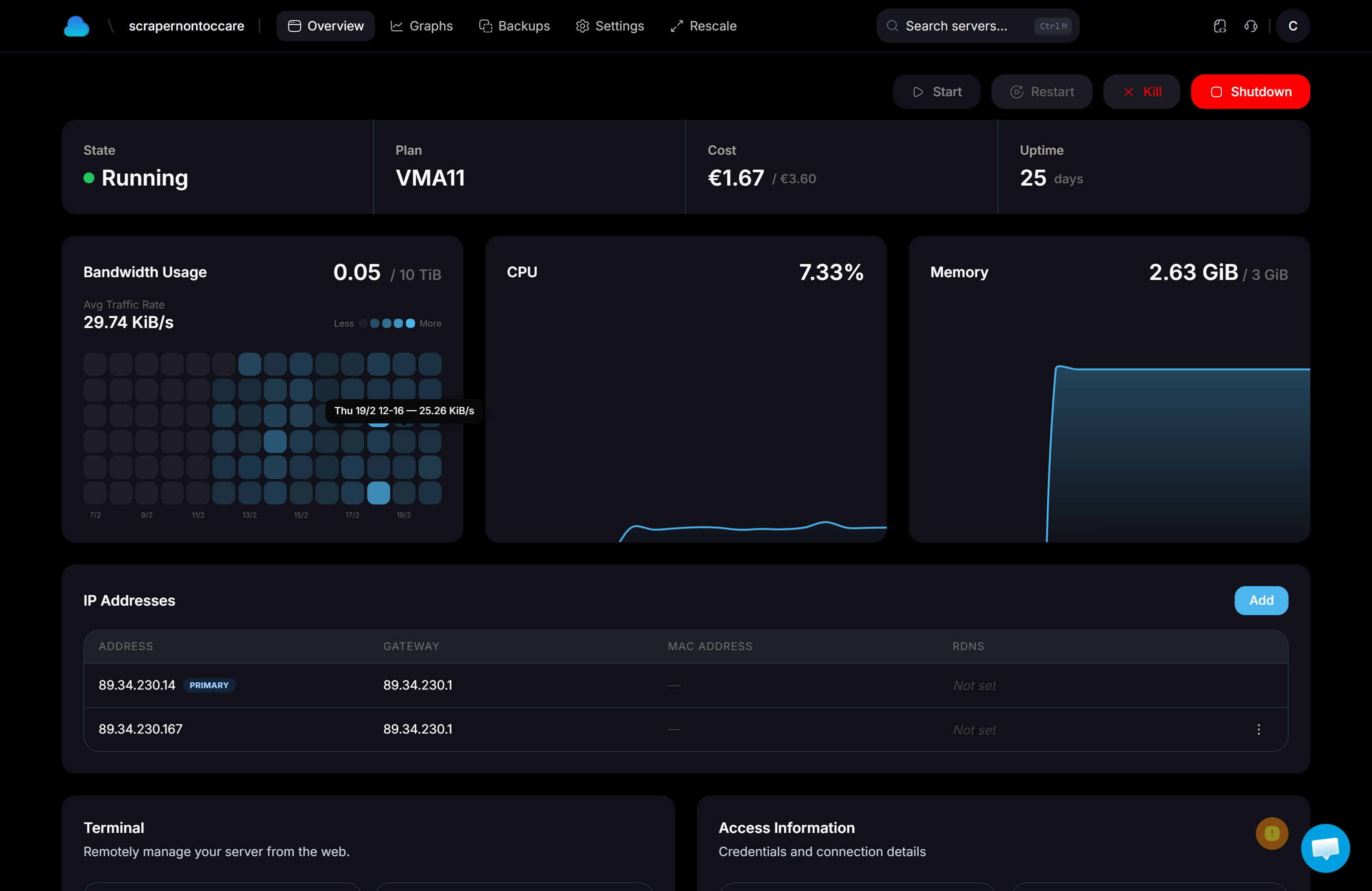

- Access to a system with a powerful NVIDIA GPU.

Setting Up the Environment

To begin fine-tuning LLaMA 3, make sure your environment has the necessary hardware and software. Multi-GPU setups can significantly speed up training and let you work with models that do not fit in a single GPU's memory. For instance, four RTX 3090s (24 GB each) can be used to fine-tune a model as large as LLaMA 3 70B, provided its weights are quantized and sharded across the cards.
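
The arithmetic behind this can be sketched with a rough, weights-only estimate. The helper below is hypothetical and deliberately simple: it assumes 2 bytes per parameter in bf16 and about 0.5 bytes in 4-bit, assumes the weights are split evenly across GPUs, and ignores activations, gradients, optimizer state, adapter weights, and quantization metadata, so real usage is always higher.

```python
# Rough, weights-only memory estimate (hypothetical helper, not a library API).
# Ignores activations, gradients, optimizer state, LoRA adapters, and
# quantization metadata, so actual GPU usage will be higher.

BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "4bit": 0.5}


def weight_memory_gb(num_params: float, dtype: str, num_gpus: int = 1) -> float:
    """Per-GPU weight memory in GB (1 GB = 1e9 bytes) when the weights
    are sharded evenly across `num_gpus` GPUs."""
    total_bytes = num_params * BYTES_PER_PARAM[dtype]
    return total_bytes / num_gpus / 1e9


if __name__ == "__main__":
    gpu_memory_gb = 24  # one RTX 3090
    for dtype in ("bf16", "4bit"):
        single = weight_memory_gb(70e9, dtype)
        sharded = weight_memory_gb(70e9, dtype, num_gpus=4)
        print(f"70B in {dtype}: {single:.1f} GB on one GPU, "
              f"{sharded:.2f} GB per GPU across 4 "
              f"(fits in {gpu_memory_gb} GB: {sharded < gpu_memory_gb})")
```

Under these assumptions, 70B parameters in bf16 need about 140 GB for the weights alone (35 GB per GPU across four cards, more than a 3090 holds), while 4-bit weights come to about 35 GB in total, or 8.75 GB per GPU.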

First, set up your Python environment and install the necessary libraries:

```bash
pip install torch transformers
```

Ensure your GPUs are properly configured and available for use:

```bash
nvidia-smi
```

Techniques and Tools

Several techniques and tools can be used for fine-tuning. For example, QLoRA (quantized LoRA) trains small low-rank adapter matrices on top of a frozen, 4-bit quantized base model. It can be run on two GPUs in parallel, although this requires careful handling to avoid errors related to model precision. Additionally, FSDP (Fully Sharded Data Parallel) shards model parameters, gradients, and optimizer states across GPUs, which makes it possible to fine-tune models such as Meta LLaMA 3 8B on a single multi-GPU node.
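
To see why QLoRA is so much lighter than full fine-tuning, it helps to count trainable parameters. LoRA represents the update to a frozen `d_out x d_in` weight matrix as the product of two small matrices, `B` (`d_out x r`) and `A` (`r x d_in`), so each adapted matrix adds only `r * (d_in + d_out)` trainable parameters. The sketch below assumes adapters on the four attention projections (q, k, v, o) of every layer and uses the dimensions from the published LLaMA 3 8B configuration (hidden size 4096, 32 layers, 8 key/value heads of size 128); the helper names are hypothetical.

```python
def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters LoRA adds to one d_out x d_in weight
    (B is d_out x rank, A is rank x d_in)."""
    return rank * (d_in + d_out)


def llama_attention_lora_params(hidden: int, num_layers: int, num_kv_heads: int,
                                head_dim: int, rank: int) -> int:
    """LoRA parameters for the q/k/v/o projections of every layer in a
    grouped-query-attention decoder (target modules are an assumption)."""
    kv_dim = num_kv_heads * head_dim  # k_proj and v_proj are narrower under GQA
    per_layer = (
        lora_params(hidden, hidden, rank)    # q_proj
        + lora_params(hidden, kv_dim, rank)  # k_proj
        + lora_params(hidden, kv_dim, rank)  # v_proj
        + lora_params(hidden, hidden, rank)  # o_proj
    )
    return per_layer * num_layers


if __name__ == "__main__":
    n = llama_attention_lora_params(hidden=4096, num_layers=32, num_kv_heads=8,
                                    head_dim=128, rank=16)
    print(f"{n:,} trainable LoRA parameters")
```

With rank 16 this comes to 13,631,488 parameters, well under 1% of the roughly 8 billion in the base model, which is why only the small adapters, not the 4-bit base weights, need gradients and optimizer state.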

Example of using FSDP:

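```python
import torch
import torch.distributed as dist
from torch.distributed.fsdp import FullyShardedDataParallel as FSDP

dist.init_process_group("nccl")         # launch with torchrun: one process per GPU
torch.cuda.set_device(dist.get_rank())  # on a single node, rank == GPU index
model = FSDP(model, device_id=torch.cuda.current_device())  # wrap your initialized model
```

Advanced Fine-Tuning Methods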

For those looking to explore advanced fine-tuning methods, ORPO (Odds Ratio Preference Optimization) merges supervised fine-tuning (SFT) and preference alignment into a single training stage: it adds an odds-ratio penalty to the standard SFT loss, so no separate RLHF stage or reference model is needed. This approach can also be combined with a multi-GPU setup. Alternatively, the SWIFT framework from ModelScope supports efficient multi-GPU training and covers everything from setting up the training environment to model quantization.
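
A minimal sketch of the ORPO objective for one preference pair, assuming the per-token log-probabilities of a preferred (`chosen`) and a dispreferred (`rejected`) response have already been computed by the model. Following the ORPO formulation, each response's probability P is its length-normalized likelihood, its odds are P / (1 - P), and the loss is the SFT negative log-likelihood of the chosen response plus λ times -log σ(log odds ratio). The function names and the default λ = 0.1 are illustrative assumptions, not a library API.

```python
import math


def mean_logprob(token_logprobs: list[float]) -> float:
    """Length-normalized log-likelihood: the average per-token log-prob."""
    return sum(token_logprobs) / len(token_logprobs)


def log_odds(avg_logp: float) -> float:
    """log(P / (1 - P)) with P = exp(avg_logp); requires avg_logp < 0."""
    return avg_logp - math.log1p(-math.exp(avg_logp))


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def orpo_loss(chosen_logprobs: list[float], rejected_logprobs: list[float],
              lam: float = 0.1) -> float:
    """SFT NLL on the chosen response plus lam * -log sigmoid(log odds ratio)."""
    chosen = mean_logprob(chosen_logprobs)
    rejected = mean_logprob(rejected_logprobs)
    nll = -chosen  # supervised fine-tuning term on the preferred response
    log_odds_ratio = log_odds(chosen) - log_odds(rejected)
    return nll - lam * log_sigmoid(log_odds_ratio)


if __name__ == "__main__":
    chosen = [-0.1, -0.2, -0.05]    # hypothetical per-token log-probs
    rejected = [-1.2, -0.9, -2.0]
    print(f"ORPO loss: {orpo_loss(chosen, rejected):.4f}")
```

The penalty shrinks as the model assigns higher odds to the chosen response than to the rejected one, so minimizing the loss both fits the preferred answer and pushes probability away from the dispreferred answer.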

Consider incorporating techniques like:

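- Gradient checkpointing, which recomputes activations during the backward pass instead of storing them, to save memory.

- Model quantization to reduce memory use and, depending on the kernels available, speed up inference.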
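
As a conceptual illustration of quantization, here is symmetric absmax quantization to 8-bit integers in pure Python: the weights are scaled so that the largest magnitude maps to 127, rounded to integers, and later multiplied back by the same scale. Libraries such as bitsandbytes use more elaborate schemes (for example, blockwise scales and the 4-bit NF4 data type used by QLoRA), so treat this as a sketch; the function names are hypothetical.

```python
def quantize_absmax_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric absmax quantization: map [-max|w|, max|w|] onto [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # avoid dividing by zero
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from int8 codes and the scale."""
    return [v * scale for v in q]


if __name__ == "__main__":
    w = [0.12, -0.5, 0.031, 0.49, -0.007]
    q, scale = quantize_absmax_int8(w)
    restored = dequantize(q, scale)
    max_err = max(abs(a - b) for a, b in zip(w, restored))
    print(q, f"scale={scale:.5f}", f"max error={max_err:.5f}")
```

Each weight now takes one byte instead of four (fp32) or two (bf16), at the cost of a rounding error of at most half the scale per weight.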

Challenges and Considerations

When fine-tuning LLaMA 3 models, keep the limitations of individual tools in mind. For instance, the Unsloth library, despite its efficiency, supported only single-GPU training at the time of writing, which may rule it out for multi-GPU setups.

Be mindful of:

- GPU memory limitations.

- Model precision and performance trade-offs.
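
Precision trade-offs are easy to see with half precision. Python's standard `struct` module can round-trip a value through IEEE 754 binary16 (fp16) using the `'e'` format, which exposes both the limited mantissa (not every integer above 2048 is representable) and the narrow range (the largest finite fp16 value is 65504). This narrow range is one reason bf16, which keeps fp32's exponent range, is usually preferred for training on GPUs that support it. The helper name below is hypothetical.

```python
import struct


def to_fp16(x: float) -> float:
    """Round-trip a Python float through IEEE 754 half precision (fp16)."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


if __name__ == "__main__":
    for value in (0.1, 2048.0, 2049.0, 65504.0):
        print(f"{value!r:>8} -> {to_fp16(value)!r}")
    try:
        to_fp16(70000.0)
    except OverflowError as exc:  # values beyond fp16's range cannot be packed
        print("70000.0 ->", exc)
```

Here 2049.0 comes back as 2048.0 and 0.1 as 0.0999755859375, while 70000.0 cannot be represented at all.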

In conclusion, fine-tuning LLaMA 3 comes down to setting up the right environment, choosing techniques that fit your hardware, and understanding the limitations of the available tools. With the right approach, you can effectively enhance the performance of your LLaMA 3 model.